1. Introduction and Purpose

This white paper outlines the vision, user needs, technical concept, and development direction for the AI-Driven Crisis Response System under the ESA Civil Security from Space (CSS) Programme. The primary focus is on leveraging satellite-based Earth Observation (EO) as the first and principal source of situational awareness in civil security incidents, supplemented by drone data, AI, and edge-based decision support systems.

The project’s aim is to support public safety agencies with real-time, accurate, wide-area situational insight, even in remote or disconnected environments. This document serves as a strategic and technical overview, incorporating insights from stakeholder interviews, workshops, and initial system design.

2. Satellite First: Strategic Priority for Breadth, Continuity, and Awareness

Earth Observation (EO) data is positioned at the core of the system architecture. Satellite data offers unmatched geographical coverage, frequent revisit cycles, and the ability to detect changes and patterns across vast terrains. In crisis response, this enables detection of:

- Lost vessels at sea and tents/shelters in the wilderness

- Moisture levels in wetlands, lakes, and riverbeds to inform lost person behavior modeling

- Hydrological estimation to support both search and rescue and wildfire response

- Hotspot detection and post-event impact mapping
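Change detection, which underpins post-event impact mapping, can be illustrated with a minimal sketch of bitemporal differencing: two co-registered rasters acquired before and after an event are compared pixel by pixel, and pixels whose absolute change exceeds a threshold are flagged. The function name `detect_changes`, the sample values, and the threshold are illustrative assumptions; an operational pipeline would add radiometric normalization, cloud masking, and learned models on top of this baseline.

```python
from typing import List, Tuple

Raster = List[List[float]]  # row-major grid of co-registered pixel values


def detect_changes(before: Raster, after: Raster, threshold: float) -> List[Tuple[int, int]]:
    """Return (row, col) indices where |after - before| exceeds the threshold.

    Hypothetical helper; assumes both rasters are co-registered and equally sized.
    """
    if len(before) != len(after) or any(len(a) != len(b) for a, b in zip(before, after)):
        raise ValueError("rasters must have the same shape")
    changed = []
    for r, (row_b, row_a) in enumerate(zip(before, after)):
        for c, (vb, va) in enumerate(zip(row_b, row_a)):
            if abs(va - vb) > threshold:
                changed.append((r, c))
    return changed


# Illustrative values only: two pixels change sharply between acquisitions.
before = [[0.20, 0.21, 0.19],
          [0.22, 0.20, 0.18]]
after = [[0.20, 0.55, 0.19],
         [0.22, 0.21, 0.60]]
print(detect_changes(before, after, threshold=0.1))  # [(0, 1), (1, 2)]
```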

The project actively formulates hypotheses and use cases in collaboration with operational stakeholders and then tasks satellites accordingly. This approach is distinctive in the civil domain and builds strong, use-driven validation pipelines.

Satellite communications (SatCom) complement EO by ensuring system-wide communication continuity. Especially in areas with limited terrestrial coverage, SatCom enables edge devices and field teams to maintain contact and stream critical alerts.

3. Drones as Operational Complements

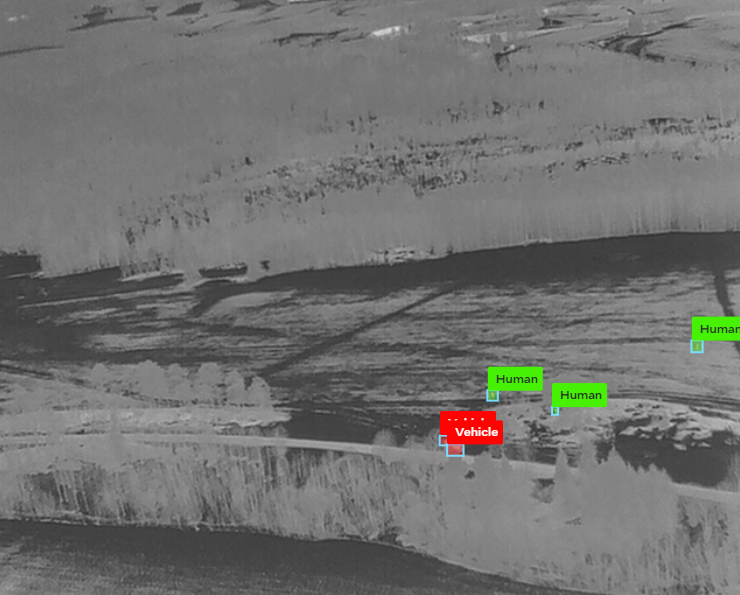

While satellites provide large-scale, top-down awareness, drones offer hyper-local detail, flexibility, and immediacy. In this system, drones are used to:

- Verify anomalies detected in satellite data (e.g., heat signatures or suspected camps)

- Deliver high-resolution imagery and thermal video to complement broader EO sources

- Assist field crews with real-time aerial perspectives in complex terrain

In disconnected areas, drones equipped with the Edge AI Processing Software (EAIPS) can transmit condensed alerts via SatCom, ensuring operational relevance even without LTE.

Use cases include:

- Visual and thermal confirmation of potential lost-hiker campsites identified in mountain valleys

- Classification of oil sheen types in fjords using drones after satellite detection

- Dynamic terrain mapping to assist first responders in post-landslide scenarios
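Because satellite links in remote areas are often narrowband, a condensed alert is best sent as a small fixed-size binary record rather than as imagery. The sketch below packs one detection into 16 bytes with Python's standard `struct` module and batches as many alerts as fit into one message. The field layout, the class codes, and the 340-byte budget (`MAX_PAYLOAD_BYTES`) are hypothetical choices for illustration, not a specification of the EAIPS wire format.

```python
import struct
from dataclasses import dataclass
from typing import List

# Hypothetical wire format, little-endian with no padding:
# uint32 timestamp, float32 lat, float32 lon, uint8 class code, uint8 confidence %, uint16 unit id
ALERT_FORMAT = "<IffBBH"
ALERT_SIZE = struct.calcsize(ALERT_FORMAT)  # 16 bytes
MAX_PAYLOAD_BYTES = 340  # assumed per-message budget of the SatCom link

CLASS_CODES = {"person": 1, "tent": 2, "vessel": 3, "hotspot": 4}  # illustrative codes


@dataclass
class Alert:
    timestamp: int     # Unix epoch seconds
    lat: float
    lon: float
    label: str
    confidence: float  # 0.0 to 1.0
    unit_id: int


def encode(alert: Alert) -> bytes:
    """Pack one alert; float32 coordinates keep roughly metre-level precision."""
    return struct.pack(
        ALERT_FORMAT,
        alert.timestamp,
        alert.lat,
        alert.lon,
        CLASS_CODES[alert.label],
        round(alert.confidence * 100),  # store confidence as an integer percentage
        alert.unit_id,
    )


def decode(payload: bytes) -> Alert:
    ts, lat, lon, code, conf, unit = struct.unpack(ALERT_FORMAT, payload)
    label = {v: k for k, v in CLASS_CODES.items()}[code]
    return Alert(ts, lat, lon, label, conf / 100, unit)


def batch(alerts: List[Alert]) -> bytes:
    """Concatenate as many encoded alerts as fit in one message."""
    max_count = MAX_PAYLOAD_BYTES // ALERT_SIZE
    return b"".join(encode(a) for a in alerts[:max_count])
```

A fixed layout lets the receiving side split a batch into 16-byte records without any framing overhead, at the cost of needing a shared, versioned class-code table on both ends.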

4. Stakeholder Needs and Operational Requirements

Stakeholder interviews have revealed consistent priorities:

- Timely information: satellite data delivered within 12 to 24 hours; drone data delivered with less than 10 seconds of latency where feasible.

- Robust usability: integration into established operational systems, one-button startup, and low training overhead

- Integrated views: satellite, drone, and ground information combined on one map within the existing Common Operating Picture

- Offline capability: local processing with optional SatCom fallback

- Secure and closed data environments
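The timeliness requirements above can be expressed as explicit per-source latency budgets that the system checks for every delivered product, so that late data is visibly flagged rather than silently presented as current. The budget values mirror the stakeholder figures (up to 24 hours for satellite data, 10 seconds for drone data); the names `LATENCY_BUDGETS` and `is_within_budget` are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Upper bounds taken from the stakeholder requirements; the drone budget applies "where feasible".
LATENCY_BUDGETS = {
    "satellite": timedelta(hours=24),
    "drone": timedelta(seconds=10),
}


def is_within_budget(source: str, acquired_at: datetime, delivered_at: datetime) -> bool:
    """True if the product reached the user within its source's latency budget."""
    latency = delivered_at - acquired_at
    if latency < timedelta(0):
        raise ValueError("delivery time precedes acquisition time")
    return latency <= LATENCY_BUDGETS[source]


t0 = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
print(is_within_budget("satellite", t0, t0 + timedelta(hours=18)))  # True
print(is_within_budget("drone", t0, t0 + timedelta(seconds=14)))    # False
```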

These insights have shaped the technical architecture and user interface strategy.

5. Technical System Overview

The system consists of three main components:

- AIDFP (AI-Driven Data Fusion Platform): hosted at a secure location in Northern Norway, it integrates EO, drone, and field data into a unified operational picture

- EAIPS (Edge AI Processing Software): deployed in the field, processes video and sensor data locally, and generates alerts in near real-time

- AITAF (AI Training and Annotation Framework): enables human-in-the-loop (HITL) feedback and correction of AI models to ensure continuous learning and improvement

These components interact via secure APIs and support real-time dashboards, mobile interfaces, and system integration with national command and control (C2) infrastructures.
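To make the component interaction more concrete, the sketch below shows one possible shape for an observation record passed from EAIPS to AIDFP and on to AITAF for human review. The field names, the JSON encoding, and the `review_status` values are assumptions for illustration; the actual interface definitions are not specified in this document.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class Observation:
    """Hypothetical record exchanged between EAIPS, AIDFP, and AITAF."""
    source: str                        # e.g. "satellite", "drone", "field"
    label: str                         # model or human label, e.g. "tent"
    lat: float
    lon: float
    confidence: float                  # model confidence, 0.0 to 1.0
    observed_at: str                   # ISO 8601 timestamp
    media_uri: Optional[str] = None    # link to an image or video clip
    review_status: str = "pending"     # "pending", "confirmed", or "rejected"
    obs_id: str = field(default_factory=lambda: str(uuid.uuid4()))

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @staticmethod
    def from_json(text: str) -> "Observation":
        return Observation(**json.loads(text))


obs = Observation("drone", "tent", 68.44, 17.43, 0.82, "2024-06-01T12:00:00Z")
print(obs.to_json())
```

Carrying a stable `obs_id` and an explicit `review_status` lets each component update the same record as it moves from edge detection through fusion to human verification.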

The EAIPS edge units are powered by ruggedized, high-performance computing modules that can run lightweight AI inference models in the field. Each unit supports onboard processing and offline operation, with resilient connectivity options to maintain operational effectiveness in disconnected scenarios. This configuration ensures that object detection, segmentation, and anomaly scoring can run even without an upstream connection.

The project benefits from hardware and software support from Intel, including high-performance servers for video analytics and multimodal inference, as well as early testing of transformer-based models on Intel Gaudi platforms. These capabilities enable rapid prototyping and benchmarking of multimodal AI agents in simulated and laboratory environments, in preparation for real-world deployment.
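Running EAIPS edge units without an upstream connection implies a store-and-forward pattern: alerts are queued locally and flushed, most urgent first, whenever a link (LTE or SatCom) becomes available. The minimal sketch below uses Python's standard `heapq`; the class name, the `send` callback, and the integer priority scheme are hypothetical placeholders.

```python
import heapq
import itertools
from typing import Callable, List, Tuple


class StoreAndForwardQueue:
    """Buffers alerts while offline and flushes them by priority when a link is up.

    Lower priority number means more urgent. A monotonically increasing counter
    breaks ties, so alerts with equal priority are sent in arrival order.
    """

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, bytes]] = []
        self._counter = itertools.count()

    def enqueue(self, payload: bytes, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._counter), payload))

    def flush(self, send: Callable[[bytes], bool]) -> int:
        """Send queued alerts until the queue is empty or a send fails.

        `send` returns True on success; a failed alert stays queued for retry.
        Returns the number of alerts sent.
        """
        sent = 0
        while self._heap:
            _, _, payload = self._heap[0]
            if not send(payload):
                break  # link dropped: keep this and remaining alerts for later
            heapq.heappop(self._heap)
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._heap)
```

A usage example: enqueue alerts while offline, then call `flush` with a function that attempts transmission over whichever link is currently available.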

6. AI and Human-in-the-Loop: Trust through Collaboration

AI is applied to object detection, thermal segmentation, hotspot prediction, and change detection. Use cases include identifying tents, boats, human figures, and terrain anomalies. Human-in-the-loop (HITL) verification ensures that:

- Critical decisions are always confirmed by a human operator

- Experts can review and annotate flagged content

- Data bias and overfitting are identified early
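One way to decide which AI outputs reach human reviewers is a simple routing rule: detections of safety-critical classes always go to urgent review regardless of score, other detections are reviewed if their confidence clears a threshold, and the remainder are kept out of the review queue. The class set, the threshold, and the function names below are hypothetical and would in practice be tuned together with operators.

```python
from dataclasses import dataclass
from typing import List

CRITICAL_CLASSES = {"person", "clothing", "distress_signal"}  # hypothetical
REVIEW_THRESHOLD = 0.3  # hypothetical: below this, non-critical detections are not queued


@dataclass
class Detection:
    det_id: str
    label: str
    confidence: float


def route(d: Detection) -> str:
    """Decide how a detection enters the human-in-the-loop workflow."""
    if d.label in CRITICAL_CLASSES:
        return "urgent_review"  # always shown to a human, regardless of score
    if d.confidence >= REVIEW_THRESHOLD:
        return "review"
    return "log_only"           # excluded from the review queue


def review_order(detections: List[Detection]) -> List[Detection]:
    """Urgent items first, then by descending confidence; log-only items excluded."""
    queued = [d for d in detections if route(d) != "log_only"]
    return sorted(queued, key=lambda d: (route(d) != "urgent_review", -d.confidence))
```

Routing critical classes on label rather than score reflects the principle that a low-confidence sighting of a person should still be seen by a human.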

To support operational usability, the HITL functionality will be deployed through a user-friendly web portal and mobile application. This interface will allow field responders, analysts, and coordinators to easily validate AI-generated outputs, provide feedback, and tag events or anomalies using tablets or smartphones. The goal is a solution that remains fully usable in the field, even under stress and time pressure.

Importantly, this mobile interface enables the inclusion of skilled volunteers, analysts, or retired professionals who may not otherwise be present in the field. These contributors can validate flagged findings remotely, vastly increasing the pool of available human resources in critical incidents.

Anomalies detected in satellite and drone data will, once verified by a human, be geo-tagged and displayed directly on the Common Operating Picture (COP), complete with image context and confidence levels. For example, if a drone operator identifies a jacket that matches the description of the missing person, the observation can be flagged via HITL and automatically added to the COP as a high-priority finding, along with its map location and visual attachment.
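A verified finding such as the jacket example could be published to a COP as a GeoJSON Feature, a widely supported open format defined in RFC 7946. Whether a given COP accepts GeoJSON is an assumption here, and the property names and URL are hypothetical. Note that GeoJSON orders coordinates as longitude, then latitude.

```python
import json
from typing import Optional


def to_cop_feature(
    lat: float,
    lon: float,
    label: str,
    confidence: float,
    verified_by: str,
    priority: str = "normal",
    image_url: Optional[str] = None,
) -> dict:
    """Build a GeoJSON Feature for a human-verified observation (hypothetical properties)."""
    return {
        "type": "Feature",
        "geometry": {"type": "Point", "coordinates": [lon, lat]},  # lon, lat per RFC 7946
        "properties": {
            "label": label,
            "confidence": confidence,
            "verified_by": verified_by,
            "priority": priority,
            "image_url": image_url,
        },
    }


feature = to_cop_feature(68.4412, 17.4105, "jacket", 0.74, "operator-07",
                         priority="high", image_url="https://example.org/frames/123.jpg")
print(json.dumps(feature, indent=2))
```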

This collaborative AI strategy ensures both robustness and operational trust.

7. Insights from Stakeholder Engagement

The project has engaged a broad and representative range of stakeholders from across the civil security domain, including:

- Fire and rescue agencies

- Volunteer-based search and rescue organizations

- Police and public order coordination units

- National and regional rescue coordination centers

- Satellite data and Earth Observation providers

Across these groups, there is strong support for a satellite-first approach to situational awareness, with drones providing tactical-level verification, and AI systems supporting both automation and human decision-making. All parties emphasized the importance of intuitive tools, rapid data access, human validation workflows, and seamless integration into existing command and control systems.

8. Next Steps: Hypotheses, Data Collection, and Validation

The project will initiate a structured brainstorming process among participating organizations to identify high-impact hypotheses for satellite- and drone-based situational awareness. These hypotheses will be grounded in real operational needs, such as identifying objects, changes, or conditions in complex terrain and remote regions. Once defined, the project will systematically collect relevant satellite imagery and sensor data to verify or refute each hypothesis.

Examples of potential hypotheses include:

- Detection of small tents in mountainous terrain

- Segmentation and ranking of wetlands by moisture level to support lost person behavior modeling

- Identification of drifting vessels through satellite time-series data and AI pattern recognition
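The wetland-moisture hypothesis could start from a standard spectral index. The Normalized Difference Moisture Index, NDMI = (NIR - SWIR) / (NIR + SWIR), generally takes higher values where moisture content is higher. The sketch ranks pre-segmented wetlands by mean NDMI; the input structure (per-wetland lists of NIR and SWIR reflectance pairs) and the wetland names are illustrative assumptions, and a real workflow would use calibrated surface reflectance from an EO sensor.

```python
from statistics import mean
from typing import Dict, List, Tuple


def ndmi(nir: float, swir: float) -> float:
    """Normalized Difference Moisture Index for one pixel."""
    denom = nir + swir
    if denom == 0:
        raise ValueError("NIR + SWIR must be non-zero")
    return (nir - swir) / denom


def rank_wetlands(pixels: Dict[str, List[Tuple[float, float]]]) -> List[Tuple[str, float]]:
    """Rank wetlands by mean NDMI, wettest first.

    `pixels` maps a (hypothetical) wetland id to its (NIR, SWIR) reflectance pairs.
    """
    scores = {wid: mean(ndmi(n, s) for n, s in px) for wid, px in pixels.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Illustrative reflectances only.
pixels = {
    "bog_a": [(0.30, 0.10), (0.32, 0.12)],
    "fen_b": [(0.28, 0.22), (0.30, 0.24)],
}
for wid, score in rank_wetlands(pixels):
    print(f"{wid}: {score:.3f}")
```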

Planned drone missions will validate AI findings from satellite data by gathering high-resolution imagery, thermal confirmation, and human-annotated ground truth. Each hypothesis will be treated as a distinct scenario, with meticulous logging of inputs, AI outputs, validation steps, and confirmation metrics.
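Confirmation metrics for each hypothesis can begin with precision and recall computed from the validation log: each AI detection is marked as confirmed or rejected by drone imagery or ground truth, and targets found in ground truth but missed by the model count as false negatives. The record structure and names below are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ValidationRecord:
    hypothesis: str
    detection_id: str
    confirmed: bool  # True if drone imagery or ground truth confirmed the AI detection


def precision_recall(records: List[ValidationRecord], missed: int) -> Tuple[float, float]:
    """Precision and recall for one hypothesis.

    `missed` is the number of ground-truth targets the model did not detect.
    """
    tp = sum(r.confirmed for r in records)
    fp = len(records) - tp
    precision = tp / (tp + fp) if records else 0.0
    recall = tp / (tp + missed) if (tp + missed) else 0.0
    return precision, recall


log = [
    ValidationRecord("small-tents", "d1", True),
    ValidationRecord("small-tents", "d2", False),
    ValidationRecord("small-tents", "d3", True),
    ValidationRecord("small-tents", "d4", True),
]
p, r = precision_recall(log, missed=2)
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.75 recall=0.60
```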

Validated observations, whether from drone or satellite, will be geo-tagged and integrated into the Common Operating Picture (COP) with accompanying visuals and metadata, enabling evidence-based decision-making and strategic coordination.

This cycle of ideation, data collection, analysis, and validation ensures both scientific rigor and real-world utility.

9. Recommendations and Future Directions

- Prioritize satellite-based detection, with drone-supported confirmation

- Continue developing multimodal AI (EO + drone + human)

- Ensure HITL validation pipelines in every major use case

- Maintain edge-first, offline-capable design, with optional SatCom support

- Expand the system into a modular civil security toolset across Europe

- Integrate lightweight digital twins of critical infrastructure for real-time state synchronization

Future Deployment of Multimodal Agents on the Edge

As AI models evolve, deploying fully multimodal agents on edge devices will become feasible. These agents will be capable of fusing visual (optical, thermal), SAR, meteorological, geospatial, and textual data to make autonomous assessments in the field. For example, an agent could receive satellite-derived flood forecasts, correlate them with drone-based water depth mapping, query local digital twin models for infrastructure vulnerabilities, and generate evacuation zone proposals in real time.

Deploying these agents requires edge hardware that supports:

- Efficient transformer architectures or GNNs (graph neural networks)

- On-device LLM fine-tuning or inference

- Local sensor fusion with asynchronous inputs

- Digital twin state management and synchronization

- Model containerization via Docker/K3s for reliability
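Local sensor fusion with asynchronous inputs typically means aligning streams that update at different rates and may arrive out of order. A common approach is an as-of join: for each query time, take the most recent reading from every stream at or before that time, and treat readings older than a staleness limit as missing. The class name, stream names, and staleness limit in this sketch are hypothetical.

```python
import bisect
from typing import Dict, List, Optional, Tuple

Reading = Tuple[float, float]  # (timestamp in seconds, value)


class AsyncFuser:
    """As-of alignment of asynchronous sensor streams (hypothetical helper)."""

    def __init__(self, max_age_s: float) -> None:
        self.max_age_s = max_age_s
        self.streams: Dict[str, List[Reading]] = {}

    def add(self, stream: str, t: float, value: float) -> None:
        """Insert a reading, keeping each stream sorted even if data arrives out of order."""
        readings = self.streams.setdefault(stream, [])
        bisect.insort(readings, (t, value))

    def snapshot(self, t: float) -> Dict[str, Optional[float]]:
        """Latest value per stream at time t, or None if missing or stale."""
        out: Dict[str, Optional[float]] = {}
        for name, readings in self.streams.items():
            # Index of the last reading with timestamp <= t.
            i = bisect.bisect_right(readings, (t, float("inf"))) - 1
            if i >= 0 and t - readings[i][0] <= self.max_age_s:
                out[name] = readings[i][1]
            else:
                out[name] = None
        return out


fuser = AsyncFuser(max_age_s=60)
fuser.add("thermal", 40.0, 33.0)
fuser.add("thermal", 10.0, 31.5)   # arrives late, still placed in order
fuser.add("water_depth", 5.0, 0.4)
fuser.add("wind_speed", 100.0, 7.2)
print(fuser.snapshot(50.0))
```

Marking stale inputs as `None` rather than silently reusing old values lets a downstream agent reason explicitly about which modalities are currently available.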

This vision will gradually be integrated into the EAIPS roadmap, ensuring compatibility with advancing processor platforms from Intel and the broader edge AI ecosystem.

10. Appendices

Further documentation is available on request.